Ubuntu 26.04 LTS “Resolute Raccoon” was released April 23, 2026. It is supported until April/May 2031 as a normal LTS, with longer coverage available through Ubuntu Pro.

This is not just another GNOME wallpaper release. 26.04 moves Ubuntu onto Linux 7.0, GNOME 50, a Wayland-only GNOME session, newer virtualization tooling, newer OpenSSH, Dracut by default, APT 3, systemd 259, and a lot of hardware enablement work.

The big shifts (quick version)

- Kernel 7.0 → new hardware baseline

- GNOME 50 → faster, cleaner desktop

- Wayland-only GNOME → Xorg is no longer an option there

- Stronger virtualization stack → actually useful for real workloads

- Modern GPU + CPU support → Intel Xe, Arc, newer AMD, better NVIDIA

Desktop changes (what you’ll actually notice)

Wayland is no longer optional in GNOME. There is no Xorg GNOME session anymore. X11 apps still work via XWayland, and other desktops can still use Xorg.
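
If a script or app needs to know which kind of session it landed in, the login environment usually tells you. This is a minimal sketch that assumes the common conventions: `XDG_SESSION_TYPE` is `wayland` or `x11`, `WAYLAND_DISPLAY` is set inside a Wayland session, and `DISPLAY` is set when an X server (native Xorg or XWayland) is reachable. `describe_session` is a hypothetical helper, not a system tool.

```python
import os
from typing import Mapping, Optional


def describe_session(env: Optional[Mapping[str, str]] = None) -> str:
    """Classify the graphical session from environment variables.

    Assumes XDG_SESSION_TYPE is set at login, WAYLAND_DISPLAY exists in
    Wayland sessions, and DISPLAY exists when an X server (native Xorg
    or XWayland) is reachable. Falls back to "unknown".
    """
    env = os.environ if env is None else env
    session_type = env.get("XDG_SESSION_TYPE", "").lower()
    has_wayland = bool(env.get("WAYLAND_DISPLAY"))
    has_x = bool(env.get("DISPLAY"))

    if session_type == "wayland" or has_wayland:
        # In a Wayland session, DISPLAY (if set) usually points at XWayland.
        return "wayland+xwayland" if has_x else "wayland"
    if session_type == "x11" or has_x:
        return "x11"
    return "unknown"


if __name__ == "__main__":
    print(describe_session())
```

Because the GNOME session on 26.04 is Wayland-only, getting `x11` back on a GNOME desktop there would be unexpected. A result of `wayland+xwayland` just means X11 apps have an XWayland server to talk to.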

GNOME 50 is a real upgrade:

- better responsiveness

- lower CPU/memory use in core components

- improved fractional scaling, VRR, HDR

- better remote desktop

Default apps got cleaned up:

- Papers (PDF) replaces Evince

- Loupe replaces Eye of GNOME

- Ptyxis replaces GNOME Terminal

- Resources replaces System Monitor

- Showtime replaces Totem

Less legacy, more consistency.

Hardware / kernel

Linux 7.0 brings:

- support for newer Intel Core Ultra generations

- Intel Xe2/Xe3 graphics

- new Intel Arc GPUs (consumer + pro)

- improved AMD/Intel video acceleration (VA-API on by default)

Power management is better too:

- improved power-profiles-daemon support (especially on AMD laptops)

- NVIDIA Dynamic Boost enabled where supported

Server / virtualization (quietly one of the best parts)

- New HWE virtualization stack (QEMU/libvirt/etc.)

- AMD SEV-SNP + Intel TDX

- better NUMA + PCI affinity

- improved virtio / multiqueue

- NVMe support in libvirt

- NVIDIA MIG support

This is a very solid base for KVM hosts.
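
For NUMA and PCI affinity on a KVM host, a common first step is finding which NUMA node a passthrough device is attached to. That lets you pin the guest's vCPUs and memory to the same node. This sketch reads the kernel's sysfs `numa_node` attribute for each PCI device. The kernel reports `-1` there when no node is associated. The function name and its `root` parameter, which lets you point it at a test directory, are illustrative.

```python
from pathlib import Path
from typing import Dict, Union

PCI_SYSFS = "/sys/bus/pci/devices"


def pci_numa_nodes(root: Union[str, Path] = PCI_SYSFS) -> Dict[str, int]:
    """Map each PCI address (e.g. '0000:3b:00.0') to its NUMA node.

    Reads <root>/<address>/numa_node. The kernel writes -1 there when the
    device has no NUMA affinity (e.g. on single-node systems). Devices
    without a readable numa_node file are skipped.
    """
    nodes: Dict[str, int] = {}
    base = Path(root)
    if not base.is_dir():
        return nodes
    for dev in sorted(base.iterdir()):
        attr = dev / "numa_node"
        try:
            nodes[dev.name] = int(attr.read_text().strip())
        except (OSError, ValueError):
            continue  # missing or unparsable attribute
    return nodes


if __name__ == "__main__":
    for addr, node in pci_numa_nodes().items():
        print(f"{addr}\tnode {node if node >= 0 else 'n/a'}")
```

A GPU or NVMe device that reports node 1 is best paired with vCPUs and memory from node 1. Crossing nodes works, but it adds latency.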

The uutils (Rust coreutils) situation

Now the controversial bit.

Ubuntu 26.04 experiments with uutils (Rust-based coreutils) in some parts of the system. This is not a full replacement for GNU coreutils across the board, but it is present enough to matter.

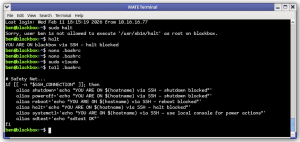

What’s the concern?

- uutils is not 100% behavior-compatible with GNU coreutils

- edge cases and scripting differences do exist

- subtle breakage is possible, especially in older or non-trivial scripts

This is not theoretical. It is exactly the kind of change that can bite admins who assume “ls/cp/mv behave exactly the same everywhere.”
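
Instead of assuming, a script can check which implementation it is actually calling. At least in current releases, both projects name themselves in their `--version` banner: GNU tools print `(GNU coreutils)` and uutils tools print `(uutils coreutils)`. This sketch relies on that string match. `classify_version_text` and `coreutils_flavor` are hypothetical helpers, not shipped tools.

```python
import shutil
import subprocess
from typing import Optional


def classify_version_text(text: str) -> str:
    """Return 'gnu', 'uutils', or 'unknown' from a --version banner."""
    if "(GNU coreutils)" in text:
        return "gnu"
    if "(uutils coreutils)" in text:
        return "uutils"
    return "unknown"


def coreutils_flavor(tool: str = "ls") -> Optional[str]:
    """Run `<tool> --version` and classify it; None if the tool is missing."""
    path = shutil.which(tool)
    if path is None:
        return None
    result = subprocess.run(
        [path, "--version"], capture_output=True, text=True, check=False
    )
    return classify_version_text(result.stdout)


if __name__ == "__main__":
    for tool in ("ls", "cp", "mv", "sort", "date"):
        print(f"{tool}: {coreutils_flavor(tool)}")
```

A script that depends on GNU-specific flags could call `coreutils_flavor()` once at startup. It could then warn or bail out if the answer is anything other than `gnu`.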

Reality check

- Most normal users won’t notice

- Most simple scripts will work fine

- Advanced scripts, weird flags, or strict POSIX/GNU assumptions may break

What you should do

If you care about consistency:

- explicitly depend on GNU coreutils in scripts when it matters

- test anything non-trivial before rolling into production

- consider pinning or reinstalling GNU coreutils if you need its exact behavior

- avoid assuming behavior based on older Ubuntu/Debian systems
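
For the “test before production” step, one low-effort technique is differential testing. You run identical arguments and input through two implementations and compare exit codes and stdout. This sketch assumes you have a GNU build and a uutils build of the same tool side by side. The paths in the `__main__` block are placeholders, not locations Ubuntu is known to use, and the helper names are hypothetical.

```python
import os
import subprocess
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Outcome:
    returncode: int
    stdout: str
    stderr: str


def run_case(cmd: Sequence[str], stdin: str = "") -> Outcome:
    """Run a command, capturing exit code and output as text."""
    proc = subprocess.run(
        list(cmd), input=stdin, capture_output=True, text=True, check=False
    )
    return Outcome(proc.returncode, proc.stdout, proc.stderr)


def diff_implementations(
    impl_a: str, impl_b: str, cases: List[List[str]], stdin: str = ""
) -> List[str]:
    """Describe every case where the two binaries disagree.

    stderr is ignored on purpose: error wording legitimately differs
    between implementations, while exit codes and stdout are what
    scripts usually depend on.
    """
    mismatches = []
    for args in cases:
        a = run_case([impl_a, *args], stdin)
        b = run_case([impl_b, *args], stdin)
        if (a.returncode, a.stdout) != (b.returncode, b.stdout):
            mismatches.append(
                f"{args}: rc {a.returncode} vs {b.returncode}, "
                f"stdout {a.stdout!r} vs {b.stdout!r}"
            )
    return mismatches


if __name__ == "__main__":
    # Placeholder (hypothetical) paths: point these at real binaries.
    GNU_SORT = "/path/to/gnu/sort"
    UU_SORT = "/path/to/uutils/sort"
    if not (os.path.exists(GNU_SORT) and os.path.exists(UU_SORT)):
        print("Set GNU_SORT and UU_SORT to real binaries first.")
    else:
        cases = [["-n"], ["-h"], ["-t,", "-k2,2"]]
        data = "10,b\n9,a\n1K,c\n"
        for line in diff_implementations(GNU_SORT, UU_SORT, cases, data):
            print(line)
```

Feed it the flag combinations your own scripts actually use. A generic list will not surface the edge cases that matter to you.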

If you’re running servers, especially automation-heavy ones, this is worth paying attention to.

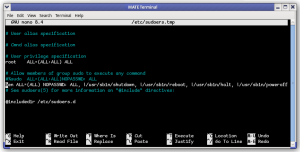

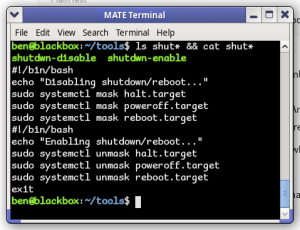

Other admin-facing changes

- OpenSSH 10.2 (older crypto removed / tightened defaults)

- Chrony replaces systemd-timesyncd

- Dracut replaces initramfs-tools (initramfs-tools still available)

- APT 3, apt-key fully gone

- systemd 259 (SysV compatibility nearing end-of-life)
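
With apt-key gone, third-party repositories are expected to reference their signing key explicitly via `Signed-By`. This typically happens in a deb822-style `.sources` file under `/etc/apt/sources.list.d/`. This sketch only renders such a stanza as text. The repository URL, suite, and keyring path in the example are placeholders, and you still need to obtain and install the vendor's key yourself.

```python
from typing import Sequence


def render_deb822_source(
    uri: str,
    suites: Sequence[str],
    components: Sequence[str],
    keyring: str,
    types: Sequence[str] = ("deb",),
) -> str:
    """Render one deb822 stanza for a .sources file.

    Field names follow the deb822 format described in sources.list(5);
    multi-value fields are space-separated. This sketch only supports
    Signed-By as an absolute keyring path (not an embedded key).
    """
    if not keyring.startswith("/"):
        raise ValueError("Signed-By should be an absolute keyring path here")
    fields = [
        ("Types", " ".join(types)),
        ("URIs", uri),
        ("Suites", " ".join(suites)),
        ("Components", " ".join(components)),
        ("Signed-By", keyring),
    ]
    return "".join(f"{name}: {value}\n" for name, value in fields)


if __name__ == "__main__":
    # Hypothetical repository and keyring path, for illustration only.
    print(
        render_deb822_source(
            uri="https://repo.example.com/ubuntu",
            suites=["resolute"],
            components=["main"],
            keyring="/etc/apt/keyrings/example.gpg",
        ),
        end="",
    )
```

You would write the output to a file such as `/etc/apt/sources.list.d/example.sources` (again a placeholder name) and then run `apt update`.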

LTS support status

- 26.04 → supported to May 2031

- 24.04 → supported to May 2029

- 22.04 → supported to May 2027

- 20.04 → now ESM only
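
The dates above follow the usual LTS pattern: a YY.04 release gets five years of standard support, so the end year is the release year plus five. This sketch encodes only that rule. It ignores the exact month and any Ubuntu Pro/ESM extension, and the function names are illustrative.

```python
from datetime import date


def standard_support_end_year(version: str) -> int:
    """End year of standard support for an Ubuntu LTS like '26.04'.

    Assumes the five-year LTS rule; month-level precision and
    Ubuntu Pro/ESM extensions are deliberately out of scope.
    """
    year_part, _, month_part = version.partition(".")
    if month_part != "04":
        raise ValueError(f"{version} is not an LTS (YY.04) release")
    return 2000 + int(year_part) + 5


def support_status(version: str, year: int) -> str:
    """Coarse, year-level status of an LTS release in a given year."""
    end = standard_support_end_year(version)
    if year < end:
        return "standard"
    if year == end:
        return "standard ends this year"
    return "ESM/Pro only"


if __name__ == "__main__":
    this_year = date.today().year
    for v in ("20.04", "22.04", "24.04", "26.04"):
        end = standard_support_end_year(v)
        print(f"{v}: standard support to {end} ({support_status(v, this_year)})")
```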

Bottom line

26.04 is a baseline shift release, not just an incremental one:

- Wayland is now the only GNOME session

- GNOME is modernized

- kernel + hardware support jumped forward

- virtualization is significantly better

- and yes, there are some experimental changes (like uutils) that deserve caution